This Single-Exposure 3D Lensless Camera Could Be the Future of Photography

News | By Stephan Jukic | September 15, 2022

Researchers at the University of California, Davis have developed a camera that replaces bulky conventional optics with a thin microlens array and new image processing algorithms, capturing 3D information about surrounding objects in a single exposure.

The new camera isn’t something just anyone can pick up at their local electronics shop (at least not yet), but it could become very useful for a range of applications. These might involve exploratory and inspection photography, gesture recognition and 3D display applications among other things.

In comments to the optical science journal Optica, research team leader Weijian Yang from the University of California, Davis said, “We consider our camera lensless because it replaces the bulk lenses used in conventional cameras with a thin, lightweight microlens array made of flexible polymer.”

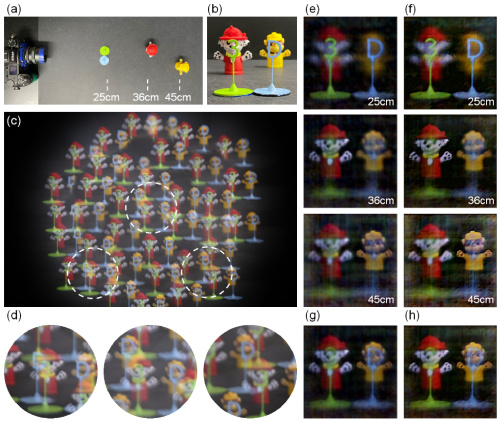

He further elaborates, “Because each microlens can observe objects from different viewing angles, it can accomplish complex imaging tasks such as acquiring 3D information from objects partially obscured by objects closer to the camera.”

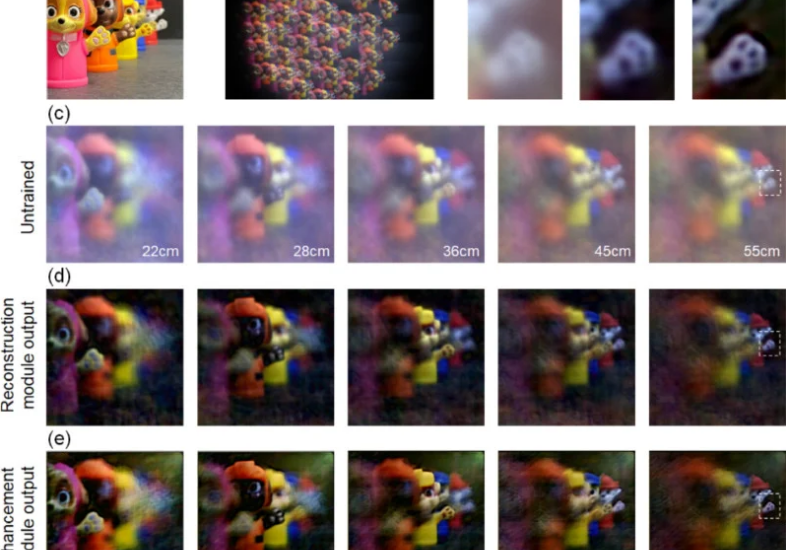

Yang and research co-author Feng Tian, a doctoral student in Yang’s lab, explained that the new camera works by learning from existing data to digitally reconstruct a scene in 3D in real time.

“This 3D camera could be used to give robots 3D vision, which could help them navigate 3D space or enable complex tasks such as manipulation of fine objects,” according to Yang. “It could also be used to acquire rich 3D information that could provide content for 3D displays used in gaming, entertainment or many other applications.”

Yang and Tian’s camera emerged from previous work in which the two researchers created a compact microscope for 3D imaging of tiny structures in biomedicine. As the researchers explain, the microscope also used a microlens array to render visuals and they then explored the possibility of using the same technology on a macroscopic imaging device.

Because each microlens in the flexible array views the scene from a slightly different position, the camera sees objects from multiple perspectives, which yields the depth and shape information needed to render them in 3D.
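To illustrate why multiple viewpoints carry depth information, here is a minimal sketch of the textbook pinhole stereo relation, in which depth equals focal length times baseline divided by disparity. This is not the researchers' reconstruction method (they use a neural network), and the function name and all numbers below are hypothetical values chosen only for illustration.

```python
def depth_from_disparity(focal_length_mm, baseline_mm, disparity_mm):
    """Textbook pinhole stereo relation: depth = focal length * baseline / disparity.

    baseline_mm is the spacing between two viewpoints (here, two microlenses);
    disparity_mm is how far the same object point shifts between their images.
    """
    if disparity_mm <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_mm * baseline_mm / disparity_mm


# Hypothetical values, not taken from the paper.
focal_length_mm = 2.0
baseline_mm = 4.0

near = depth_from_disparity(focal_length_mm, baseline_mm, 0.125)   # larger shift
far = depth_from_disparity(focal_length_mm, baseline_mm, 0.0625)   # smaller shift
print(f"near object: {near:.1f} mm, far object: {far:.1f} mm")
```

The point of the sketch is the inverse relationship: the closer an object is, the more its image shifts from one microlens to the next.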

Image Credits: Optica

Previous research efforts by others have used a more basic form of this technology in their own microlens arrays but with only one lens layer. Yang and Tian’s camera instead uses multiple lens layers for more precise imaging at speed. This was previously hard to achieve because of “extensive calibration processes” and slow photo reconstruction speeds.

Of previous efforts at this kind of image processing, Yang says, “Many existing neural networks can perform designated tasks, but the underlying mechanism is difficult to explain and understand.” Of his team’s camera and processing system, he says, “Our neural network is based on a physical model of image reconstruction. This makes the learning process much easier and results in high-quality reconstructions.”

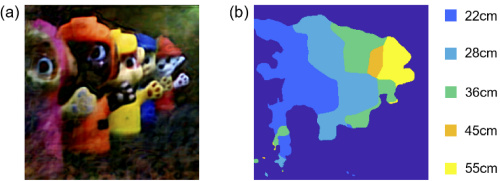

According to the researchers, once the learning process is complete, the camera can rapidly reconstruct images that contain multiple objects at different distances from it. It can do this quickly because it doesn’t need complex calibration, and it can also map the 3D locations and spatial profiles of objects.

What’s more, the camera can create 3D images of multiple objects at different depths in a way that lets the resulting 3D photo be refocused accurately to the distance of any of the objects captured. For Yang this means that “our camera could image objects behind the opaque obstacles.” He also stated, “To the best of our knowledge, this is the first demonstration of imaging objects behind opaque obstacles using a lensless camera.”
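As a rough illustration of how a multi-view capture can be refocused after the fact, the sketch below uses classical shift-and-add refocusing on a toy one-dimensional scene. It is a stand-in technique from light-field imaging, not the physics-aware neural network the researchers describe, and the lens offsets, disparities and helper names are all hypothetical.

```python
def shift(signal, k):
    """Shift a 1D signal right by k samples (left if k < 0), padding with zeros."""
    n = len(signal)
    out = [0.0] * n
    for i, value in enumerate(signal):
        j = i + k
        if 0 <= j < n:
            out[j] = value
    return out


def refocus(views, offsets, disparity):
    """Shift-and-add: undo each view's parallax for one depth, then average."""
    n = len(views[0])
    acc = [0.0] * n
    for view, offset in zip(views, offsets):
        aligned = shift(view, -offset * disparity)
        for i in range(n):
            acc[i] += aligned[i]
    return [a / len(views) for a in acc]


def make_view(offset, size=21):
    """Toy scene seen from one lens: a near point and a far point (hypothetical)."""
    view = [0.0] * size
    view[10 + 2 * offset] += 1.0  # near point: moves 2 samples per unit lens offset
    view[4] += 1.0                # far point: no measurable parallax
    return view


offsets = [-1, 0, 1]  # hypothetical lens positions along one axis
views = [make_view(o) for o in offsets]

focused_near = refocus(views, offsets, disparity=2)  # near point adds up at index 10
focused_far = refocus(views, offsets, disparity=0)   # far point adds up at index 4
```

When the assumed disparity matches an object's depth, its copies from every view land on the same sample and reinforce each other, while objects at other depths are smeared across several samples. The same averaging is why light gathered from several viewpoints can reveal an object that is blocked in some views but visible in others.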

With these capabilities, the camera could be used to give robots 3D sight in a way that lets them navigate complex spaces and perform complicated manipulative tasks much more easily. The camera could also have robots or probing devices scan concealed and inaccessible spaces in detail in ways that conventional cameras can’t.

Future uses for the camera technology could also very well end up in consumer electronics stores. As Yang noted, the rich 3D information it acquires could provide content for 3D displays used in gaming and entertainment.

It’s worth noting that the camera’s lens array is only 12 millimeters across, with a total of 37 microlenses distributed inside this space. This is the entire lens system used to capture a complex scene, which the physics-aware deep learning algorithms behind the camera then resolve into images in real time.

In other words, it is quite possible that near-future consumer cameras and smartphones could incorporate the same technology for recreational shooting unlike any seen so far. This is in fact what Yang and his team plan to work on next, once they’ve reduced the artifacts and errors that their microlens camera still produces.

Yang’s full research paper is available on the Optica journal’s website for anyone interested in the deeper details.
