How You Can Benefit from Computational Photography

Love it or hate it, computational photography is here to stay. Learn how you can benefit from it as a photographer in this insightful article.

By Andy Day

Shotkit may earn a commission on affiliate links.

Computational photography is bringing dramatic changes to how we create images, taking advantage of software and machine learning to enhance and extend technology’s ability to produce photographs.

This article looks at how it’s defined, its influence on how we create photographs, and how those developments could benefit you as a photographer.

What is Computational Photography?

The term computational photography describes the techniques being used to push our ability to create photographs, going beyond conventional image-making by using machine learning, algorithms, artificial intelligence, image stacking, depth mapping, and more.

These techniques give us the opportunity to produce images that might not have been possible due to the limitations of hardware, or perhaps simulate new images that borrow from a computer’s understanding of, for example, what makes a good photo, what a face looks like, or our idea of a perfect sky.

Computational photography has seen significant developments in the last ten years as technology companies have searched for ways to make smartphone cameras match, or even outperform, traditional DSLR-style cameras without adding huge lenses and physically larger sensors.

Smartphones have much greater processing power than conventional cameras. Companies such as Apple and Google have used this potential not only to overcome the limitations imposed by such small sensors and lenses, but also to bring other advantages that improve your photographs even before you have pressed the shutter button.

Software developers have also been hard at work, bringing new ways to edit and enhance our images.

12 Examples of Computational Photography (+ Benefits)

1. Change the weather

If you’ve just driven a hundred miles to an incredible location, the last thing you need is terrible weather.

With landscape photography so often dependent on the quality of light and being able to shoot in perfect conditions, software such as Luminar AI now gives you the tools to create distinctive weather when you sit down to edit your photographs.

With Luminar AI, you can add fog or create a golden-hour glow with just a few clicks. You can even insert the sun itself and direct shafts of sunlight as it peeks from behind the crest of a mountain.

To some, this will feel like cheating, but not everyone has the time or resources to go on long trips and spend countless days on location in order to get perfect results.

You can read more about Luminar in our review.

2. Create beautiful bokeh on a smartphone

© Pordán Krisztián

Smooth, creamy backgrounds give a cinematic feel to an image, but the small sensors inside a smartphone usually mean that you’ll be shooting with a deep depth of field, creating photos that are sharp from front to back.

To overcome these restrictions, Apple and Google have pioneered portrait mode, each taking slightly different approaches to create very similar results: a pin-sharp subject, and a nice blurry background.

Both companies’ phones use data from the image to calculate how far away different elements of the photograph are (a process called “depth mapping”) and then blur whatever lies beyond the subject, creating a shallow depth of field.
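To make the idea concrete, here’s a heavily simplified Python sketch of how a depth map could drive background blur. It is an illustration under stated assumptions, not Apple’s or Google’s actual pipeline: the image and depth map are small greyscale grids, `portrait_blur` and its `tolerance` and `radius` parameters are hypothetical names, and a plain box blur stands in for the far more sophisticated blur real phones apply.

```python
# Hypothetical sketch of depth-based background blur ("portrait mode").
# Assumptions: greyscale image and depth map are equal-sized lists of rows,
# and depth uses arbitrary units where larger values are further away.

def portrait_blur(image, depth, focus_depth, tolerance=0.5, radius=1):
    """Keep pixels near focus_depth sharp; box-blur everything further away."""
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]  # start from a copy of the original
    for y in range(h):
        for x in range(w):
            if depth[y][x] - focus_depth <= tolerance:
                continue  # on (or in front of) the subject plane: leave sharp
            # Background pixel: average its neighbourhood from the ORIGINAL image
            vals = [
                image[j][i]
                for j in range(max(0, y - radius), min(h, y + radius + 1))
                for i in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            out[y][x] = sum(vals) / len(vals)
    return out


image = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
depth = [[10, 10, 10], [10, 1, 10], [10, 10, 10]]  # the centre pixel is the subject
result = portrait_blur(image, depth, focus_depth=1)
print(result[1][1])  # 9 (subject stays sharp)
print(result[0][0])  # 2.25 (background averaged with its neighbours)
```

Real portrait modes typically increase the blur the further a pixel sits behind the subject; a fixed radius simply keeps the sketch short.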

3. Find the perfect composition

Having been trained by looking at countless images taken by professional photographers, Luminar 4 can now look at one of your photographs and recommend the perfect crop to give it the strongest possible composition.

Through its analysis of thousands and thousands of photos, the software has been taught what makes a good photograph and you can now ask it to apply that knowledge at the click of a button.

If this feels like cheating, you could simply treat it as a learning tool or use it to get a few ideas before deciding on your own version.

See our review of Luminar 4 here.

4. Make a person smile, long after you’ve taken their photo

Imagine sitting down to edit a batch of photos and discovering that the one shot where everything came together perfectly is also the one shot where your subject isn’t smiling.

Adobe Photoshop’s Neural Filters now allow you to bring a smile to that person’s face with the help of a single slider.

Neural Filters work by comparing your image to a huge bank of images in the cloud, using this massive database to calculate what that person’s smile might look like.

The filter has only just been released and while the results aren’t perfect — they can be a bit unsettling, particularly if you know the person whose smile you are manufacturing! — it’s a sign of what’s coming in the future.

This feature and the various other AI-driven filters are available in the latest version of Photoshop to anyone subscribed to the Adobe Creative Cloud.

5. Enlarge your images without compromising quality

If you’ve just heavily cropped an image (say, a photo of a bird in flight taken with a lens that wasn’t quite as long as you’d like), you may have just reduced its resolution quite dramatically.

Upscaling an image using software to regain some resolution and make it of high enough quality for printing has been a much sought-after feature in image manipulation software. Thanks to computational photography, you can now achieve some spectacular results.

There are still limitations but software such as Gigapixel AI from Topaz Labs has dramatically improved what’s possible, claiming to offer enlargements of up to 600%.

Rather than just using interpolation (i.e., some hardcore maths) to calculate the new pixels, Topaz Labs trained Gigapixel AI on thousands of pairs of low- and high-resolution images so that it learned how to recognise certain structures within an image.

Gigapixel AI then draws on this knowledge to upscale images, producing photographs that are sharper and have fewer artefacts.
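For comparison, here’s a minimal Python sketch of the conventional approach that Gigapixel AI goes beyond: bilinear interpolation, where every new pixel is a weighted average of its four nearest neighbours in the original. The function name `bilinear_upscale` is hypothetical, and the image is assumed to be a small greyscale grid. Notice that it can only blend existing values; it has no way of inventing detail, which is exactly the gap that trained models try to fill.

```python
def bilinear_upscale(img, factor):
    """Enlarge a greyscale grid by an integer factor using bilinear interpolation."""
    h, w = len(img), len(img[0])
    nh, nw = h * factor, w * factor

    def src(coord, n_out, n_in):
        # Map an output coordinate back into the source grid so corners line up
        return coord * (n_in - 1) / (n_out - 1) if n_out > 1 else 0.0

    out = []
    for y in range(nh):
        sy = src(y, nh, h)
        y0 = int(sy)
        y1 = min(y0 + 1, h - 1)
        fy = sy - y0  # vertical weight between rows y0 and y1
        row = []
        for x in range(nw):
            sx = src(x, nw, w)
            x0 = int(sx)
            x1 = min(x0 + 1, w - 1)
            fx = sx - x0  # horizontal weight between columns x0 and x1
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


small = [[0, 10], [20, 30]]
for r in bilinear_upscale(small, 2):
    print([round(v, 1) for v in r])
```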

Adobe has recently introduced Enhance Super Resolution to its Camera Raw software, which doubles the width and height of a raw file (quadrupling its pixel count), again drawing on what it has learned from millions of photographs.

See also: how does Super Resolution work?

6. Change a person’s age

The Neural Filters in Adobe Photoshop take your image and compare it with millions of photographs in the cloud, allowing them to guess how a person would look if you were to shave off a decade or two, or to look a few decades into the future.

The resulting photograph is alarmingly convincing, retaining enough of the original features to preserve high-resolution detail.

Photoshop has only recently introduced this feature and yet the results are remarkable.

See this guide for the various ways to get hold of Photoshop.

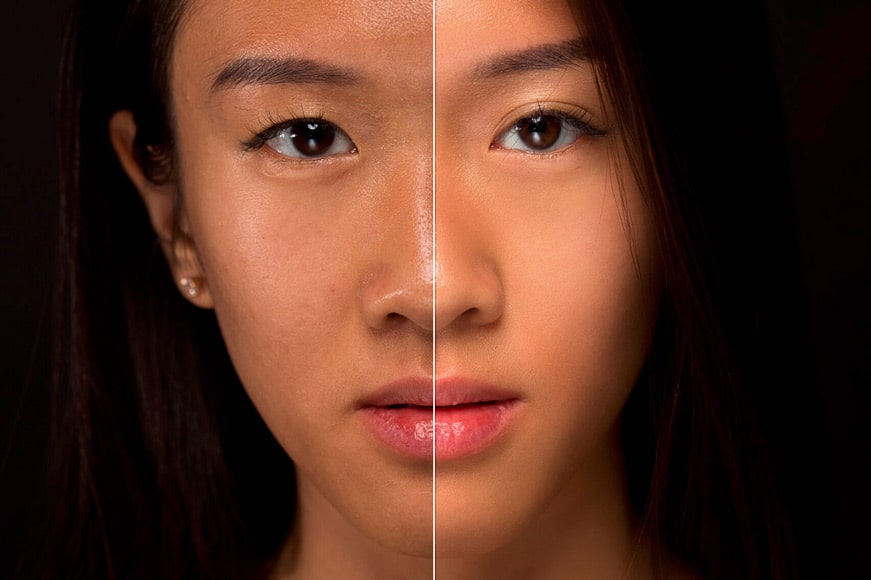

7. Perfect a portrait in seconds

Removing shadows from under a person’s eyes and giving their face a healthy glow is a retoucher’s art — until now.

Software such as Luminar AI gives you sliders to instantly remove shadows and wrinkles, and you can even plump up lips and enlarge eyes.

The tricks used to make models on billboards look perfect are now available to everyone.

8. Stitch a panorama and get creative

Earlier versions of Adobe Photoshop could already stitch a series of photographs together into a panorama, but this was often a complicated process that produced occasionally clunky results.

Fast forward a few years and Lightroom can now blend raw files into a panorama in just a few clicks. Here’s a list of the top tools for stitching together photos into panoramas.

Smartphones, however, have been even further ahead for several years now, taking advantage of smaller file sizes and faster processors. Even the cheapest smartphones will build a panorama on the fly, stitching the image together as you pan your phone.

It doesn’t have to be landscapes: you can stitch together interiors where your lens isn’t wide enough to take in an entire room, or you could try out the Brenizer method to create a unique-looking portrait.

This technique involves using a telephoto lens and shooting a number of photographs that together give the impression of a much wider-angle lens, while keeping an otherwise impossibly shallow depth of field that gives the image a distinctive, cinematic feel.
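Under the hood, stitching starts with working out how two frames overlap. The sketch below is a deliberately simple, hypothetical illustration in Python: it treats each frame as a single row of greyscale pixels, tries every possible overlap width, keeps the one where the pixels match best (lowest mean absolute difference), and averages the shared strip. Real panorama tools match features in two dimensions and correct for rotation and lens projection, so treat this as the core idea only; `best_overlap` and `stitch` are made-up names.

```python
def best_overlap(left, right, min_overlap=3):
    """Return the overlap width where the end of `left` best matches the start of `right`."""
    if min_overlap < 1:
        raise ValueError("min_overlap must be at least 1")
    best_w, best_score = None, float("inf")
    for w in range(min_overlap, min(len(left), len(right)) + 1):
        # Mean absolute difference between the candidate overlapping strips
        score = sum(abs(p - q) for p, q in zip(left[-w:], right[:w])) / w
        if score < best_score:
            best_w, best_score = w, score
    if best_w is None:
        raise ValueError("frames are shorter than min_overlap")
    return best_w


def stitch(left, right, min_overlap=3):
    """Join two overlapping rows, averaging the pixels they share."""
    w = best_overlap(left, right, min_overlap)
    blended = [(p + q) / 2 for p, q in zip(left[-w:], right[:w])]
    return left[:-w] + blended + right[w:]


scene = list(range(20))             # a stand-in for one wide scene
left, right = scene[:12], scene[8:]  # two "frames" sharing four pixels
print(best_overlap(left, right))     # 4
print(stitch(left, right) == scene)  # True
```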

9. Fix a blurry, out of focus photo

Having a photograph that’s not quite as sharp as you’d like it to be is immensely frustrating, and software developers have long been trying to tackle this problem. Whether the cause is motion blur or missed focus, correcting it has been close to impossible, until now.

Computational photography has brought notable progress, as AI can make better estimates of what detail should be added, pixel by pixel, to make an image sharper. Sharpen AI from Topaz Labs, for example, produces some impressive results.

Topaz Labs trained its software on millions of photographs, and Sharpen AI (see our review) uses this knowledge to generate an improved image.

The next stage for AI-fuelled sharpening might be to draw on a bank of images stored in the cloud, not simply to apply some sharpening and noise reduction, but to actually generate elements within the image that simply weren’t there before.

Apps like Remini already show how this is possible, and no doubt this will be incorporated into image-editing software more broadly in the near future.

10. Swap out the sky for something better

High-end real estate photography used to be about waiting for perfect skies before heading out to shoot a property, and photographers have long been swapping out skies to get around this restriction.

With computational photography, this is now even easier, with software packages such as Photoshop, ON1 Photo RAW and Luminar AI automating the process for you and offering a library of different skies from which to choose.

11. Create perfect skin

Crafting perfect skin is an art, and avoiding making a model look as though their face were made from rubber usually involves complex techniques such as frequency separation, along with a lot of detailed cloning work.

Thanks to various modern editing apps, much of that hard work can now be skipped.

Computational photography allows software to remove blemishes and smooth skin without eliminating all of the texture that keeps it looking realistic.

For high-end work, hardcore professionals will stick to Photoshop and countless layers, but the rest of us will be blown away by what these incredible new apps have to offer.
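The frequency separation technique mentioned above is easy to sketch. The idea is to split an image into a low-frequency layer (broad tones and colour) and a high-frequency layer (fine texture such as pores), smooth only the low layer, then add the texture back. This minimal Python version works on a single row of greyscale values and uses a box blur for the split, whereas a Gaussian blur is the more common choice in practice. The function names are hypothetical, and it shows the manual technique rather than how any particular app’s AI works.

```python
def box_blur(row, radius):
    """Mean of each pixel's neighbourhood (edges use a smaller window)."""
    n = len(row)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(row[lo:hi]) / (hi - lo))
    return out


def frequency_separate(row, radius=2):
    low = box_blur(row, radius)               # broad tones and colour transitions
    high = [p - l for p, l in zip(row, low)]  # fine texture left over
    return low, high


def smooth_skin(row, radius=2, smooth_radius=3):
    low, high = frequency_separate(row, radius)
    low = box_blur(low, smooth_radius)           # even out blotchy tones only
    return [l + h for l, h in zip(low, high)]    # add the untouched texture back


row = [10, 12, 30, 11, 13, 10, 12]  # a small "blemish" at index 2
print([round(v, 1) for v in smooth_skin(row)])
```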

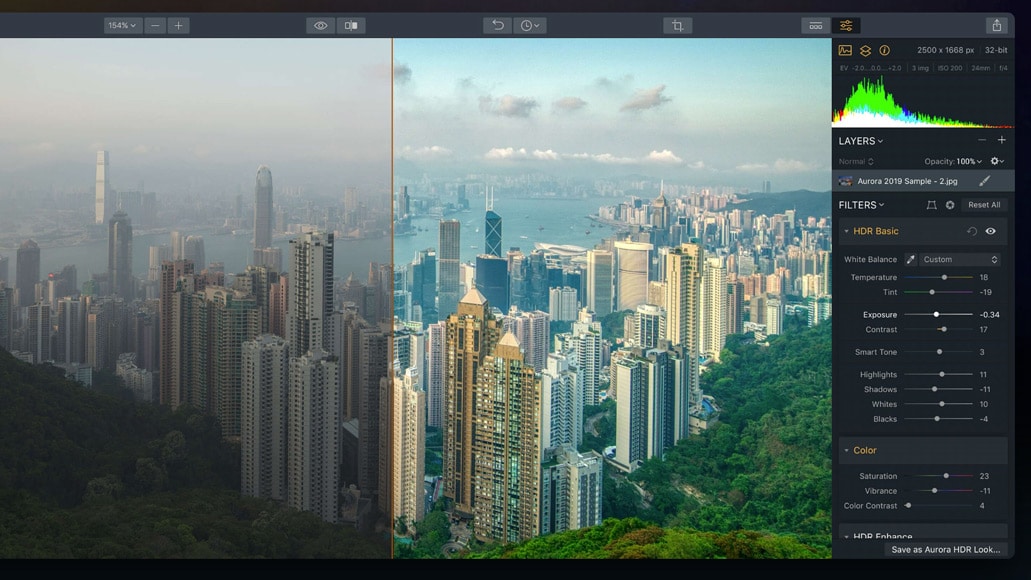

12. High Dynamic Range photography

HDR photography involves shooting several almost-identical images of the same scene at different exposures, then using software to blend them together.

This lets you see detail in both the darkest shadows and the brightest highlights within one image, detail that would otherwise have been lost at one extreme or the other.
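Here’s a simplified Python sketch of that blending step. It assumes pixel values between 0 and 1, a sensor that responds linearly to light, and known exposure times; `merge_hdr` is a hypothetical name. Each frame’s pixel is divided by its exposure time to estimate scene brightness, and the estimates are averaged with a “hat” weight that trusts mid-tones and ignores pixels clipped to pure black or white. The result still needs tone mapping before it can be shown on a normal screen.

```python
def merge_hdr(exposures, times):
    """Merge bracketed greyscale frames (values 0..1) into one radiance estimate.

    Assumes a linear sensor response: radiance is proportional to value / time.
    """
    h, w = len(exposures[0]), len(exposures[0][0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            num = den = 0.0
            for img, t in zip(exposures, times):
                v = img[y][x]
                weight = 1.0 - abs(2.0 * v - 1.0)  # 1 at mid-grey, 0 when clipped
                num += weight * (v / t)
                den += weight
            if den > 0:
                out[y][x] = num / den
            else:
                # Every frame is clipped here: fall back to the shortest exposure
                i = min(range(len(times)), key=lambda k: times[k])
                out[y][x] = exposures[i][y][x] / times[i]
    return out


# Two brackets of a two-pixel scene: a 1 s exposure and a 1/4 s exposure
bright = [[1.0, 0.2]]  # the first pixel is blown out
dark = [[0.5, 0.05]]
print(merge_hdr([bright, dark], [1.0, 0.25]))  # approximately [[2.0, 0.2]]
```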

HDR has evolved dramatically thanks to smartphone technology. For instance, did you know that many phones start capturing frames into a buffer before you’ve even pressed the shutter button?

This allows the phone to assess the scene intelligently and, if there’s too much contrast, construct an image from multiple exposures, reducing noise and even the motion blur caused by not holding the phone perfectly still.
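The noise-reduction part of that trick comes down to averaging. Random sensor noise differs from frame to frame, so averaging N frames of a static scene cuts it by roughly the square root of N. The Python sketch below simulates this with synthetic noise; the function names, noise level and frame count are assumptions for illustration, and real phone pipelines also align frames and reject moving objects before merging, which this skips entirely.

```python
import random
import statistics


def average_frames(frames):
    """Average already-aligned burst frames pixel by pixel (each frame is a flat list)."""
    n = len(frames)
    return [sum(pixels) / n for pixels in zip(*frames)]


def simulate_burst(true_value, num_pixels, num_frames, noise_sigma, seed=0):
    """Hypothetical test data: a flat grey scene plus random Gaussian sensor noise."""
    rng = random.Random(seed)
    return [
        [true_value + rng.gauss(0.0, noise_sigma) for _ in range(num_pixels)]
        for _ in range(num_frames)
    ]


frames = simulate_burst(0.5, num_pixels=5000, num_frames=16, noise_sigma=0.1)
single_noise = statistics.pstdev(frames[0])
merged_noise = statistics.pstdev(average_frames(frames))
# With 16 frames, the noise should drop to roughly a quarter (sqrt(16) = 4)
print(f"one frame: {single_noise:.3f}, 16 frames averaged: {merged_noise:.3f}")
```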

Final Words

Computational photography is providing tools that are transforming the processes that we use to create images.

It’s never been so easy to create stunning results, taking advantage of machine learning to make changes to our photographs that in the past would have required hours and hours of work and a high level of expertise.

We’ve only touched on a few of the ways that computational photography is bringing changes, so feel free to leave other examples in the comments below, and don’t hesitate to get in touch if you have any questions.


Thank you to PetaPixel for linking to this article in their weekly “Great Reads In Photography” feature. 😊

Hard not to be amazed at the direction photography is taking: How to falsify information! This love affair with fake things is not good for photography or society.

I think people were saying the same thing back in the 1830s…! 😊